MCA Microsoft Azure Data Engineer Training & Certification Boot Camp – 5 Days (2 Courses, 2 Exams, 2 Certs)

Training Schedule and Pricing

Our training model blends knowledge and certification prep into one solution. Interact face-to-face with vendor certified trainers AT OUR TRAINING CENTER IN SARASOTA, FL - OR - attend the same instructor-led live camp ONLINE.

- Apr 29, 2024 | Delivery Format: CLASSROOM LIVE | Date: 04.29.2024 - 05.03.2024 | Location: SARASOTA | Price Includes: Instructor Led Class, Official Courseware, Labs and Exams | Price: $3,995 | Duration: 5 days

- May 27, 2024 | Delivery Format: CLASSROOM LIVE | Date: 05.27.2024 - 05.31.2024 | Location: SARASOTA | Price Includes: Instructor Led Class, Official Courseware, Labs and Exams | Price: $3,995 | Duration: 5 days

- Jun 24, 2024 | Delivery Format: CLASSROOM LIVE | Date: 06.24.2024 - 06.28.2024 | Location: SARASOTA | Price Includes: Instructor Led Class, Official Courseware, Labs and Exams | Price: $3,995 | Duration: 5 days

What's Included

2 Microsoft Test Vouchers

2 Microsoft Official Courses

1 Retake Voucher (per exam, if needed)

Microsoft Study Labs & Simulations

Onsite Pearson Vue Test Center

Instructor Led Classroom Training

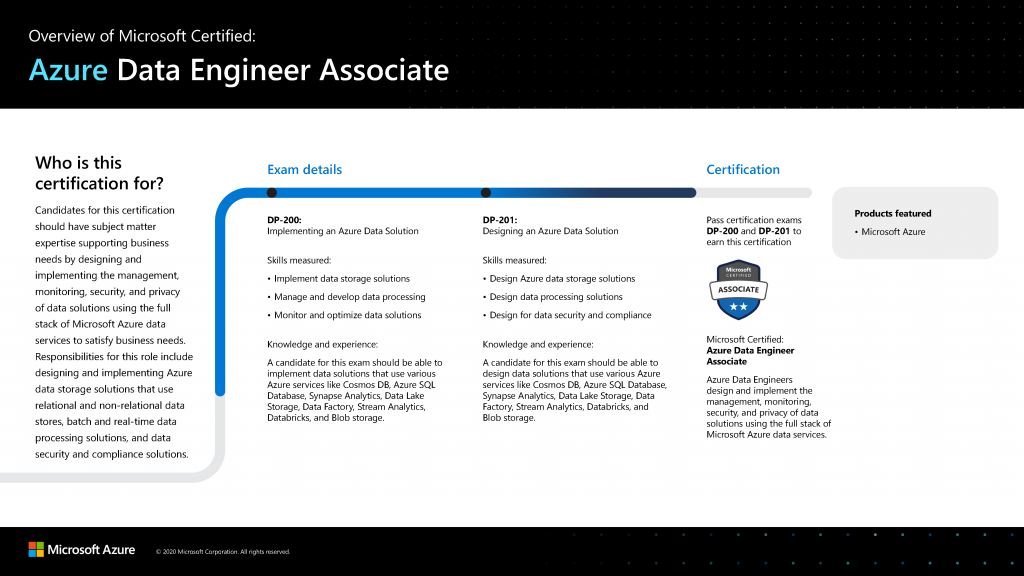

The NEW Microsoft Azure Data Engineer Training & Certification 5 Day Boot Camp focuses on what Azure Data Engineers need in order to design and implement the management, monitoring, security, and privacy of data using the full stack of Azure data services to satisfy business needs.

The Microsoft Azure Data Engineer Associate Boot Camp is taught using TWO Microsoft Official Courses

DP-900T00: Microsoft Azure Data Fundamentals

DP-203T00: Data Engineering on Microsoft Azure

While attending this 5-day instructor-led live camp, students will take 2 exams, DP-203 (replaces DP-200 / DP-201) and DP-900, to achieve the Microsoft Certified Fundamentals and Associate certifications. This hands-on camp focuses on the real-world responsibilities of an Azure Data Engineer and covers the information needed for the certification exams, which are administered while attending.

Skills Gained:

Describe core data concepts in Azure

Explain concepts of relational data in Azure

Explain concepts of non-relational data in Azure

Identify components of a modern data warehouse in Azure

Explore compute and storage options for data engineering workloads in Azure

Run interactive queries using serverless SQL pools

Perform data Exploration and Transformation in Azure Databricks

Explore, transform, and load data into the Data Warehouse using Apache Spark

Ingest and load Data into the Data Warehouse

Transform Data with Azure Data Factory or Azure Synapse Pipelines

Integrate Data from Notebooks with Azure Data Factory or Azure Synapse Pipelines

Support Hybrid Transactional Analytical Processing (HTAP) with Azure Synapse Link

Perform end-to-end security with Azure Synapse Analytics

Perform real-time Stream Processing with Stream Analytics

Create a Stream Processing Solution with Event Hubs and Azure Databricks

Topics Covered in this Official Boot Camp

Explore core data concepts

Students will learn the fundamentals of database concepts in a cloud environment, gain basic skills in cloud data services, and build their foundational knowledge of cloud data services within Microsoft Azure. Students will identify and describe core data concepts such as relational, non-relational, big data, and analytics, and explore how this technology is implemented with Azure. Students will explore the roles, tasks, and responsibilities in the world of data.

Lessons

- Explore core data concepts

- Explore roles and responsibilities in the world of data

- Describe concepts of relational data

- Explore concepts of non-relational data

- Explore concepts of data analytics

After completing this module, students will be able to:

- Show foundational knowledge of cloud data services within Azure

- Identify and describe core data concepts such as relational, non-relational, big data, and analytics

- Explain how this technology is implemented with Azure

Explore relational data in Azure

Students will learn the fundamentals of database concepts in a cloud environment, gain basic skills in cloud data services, and build their foundational knowledge of cloud data services within Microsoft Azure. Students will explore relational data offerings, provisioning and deploying relational databases, and querying relational data through cloud data solutions with Azure.

Lessons

- Explore relational data services in Azure

- Explore provisioning and deploying relational database services in Azure

- Query relational data in Azure

After completing this module, students will be able to:

- Describe relational data services on Azure

- Explain provisioning and deploying relational databases on Azure

- Query relational data through cloud data solutions in Azure

Explore non-relational data in Azure

Students will learn the fundamentals of database concepts in a cloud environment, gain basic skills in cloud data services, and build their foundational knowledge of cloud data services within Azure. Students will explore non-relational data services, provisioning and deploying non-relational databases, and non-relational data stores with Microsoft Azure.

Lessons

- Explore non-relational data services in Azure

- Explore provisioning and deploying non-relational data services on Azure

- Manage non-relational data stores in Azure

After completing this module, students will be able to:

- Describe non-relational data services on Azure

- Explain provisioning and deploying non-relational databases on Azure

- Describe non-relational data stores on Azure

Explore modern data warehouse analytics in Azure

Students will learn the fundamentals of database concepts in a cloud environment, gain basic skills in cloud data services, and build their foundational knowledge of cloud data services within Azure. Students will explore the processing options available for building data analytics solutions in Azure, including Azure Synapse Analytics, Azure Databricks, and Azure HDInsight. Students will learn what Power BI is, including its building blocks and how they work together.

Lessons

- Examine components of a modern data warehouse

- Explore data ingestion in Azure

- Explore data storage and processing in Azure

- Get started building with Power BI

After completing this module, students will be able to:

- Describe processing options available for building data analytics solutions in Azure

- Describe Azure Synapse Analytics, Azure Databricks, and Azure HDInsight

- Explain what Microsoft Power BI is, including its building blocks and how they work together

Explore compute and storage options for data engineering workloads

This module provides an overview of the Azure compute and storage technology options that are available to data engineers building analytical workloads. This module teaches ways to structure the data lake, and to optimize the files for exploration, streaming, and batch workloads. The student will learn how to organize the data lake into levels of data refinement as they transform files through batch and stream processing. Then they will learn how to create indexes on their datasets, such as CSV, JSON, and Parquet files, and use them for potential query and workload acceleration.

Lessons

- Introduction to Azure Synapse Analytics

- Describe Azure Databricks

- Introduction to Azure Data Lake storage

- Describe Delta Lake architecture

- Work with data streams by using Azure Stream Analytics

Lab : Explore compute and storage options for data engineering workloads

- Combine streaming and batch processing with a single pipeline

- Organize the data lake into levels of file transformation

- Index data lake storage for query and workload acceleration

After completing this module, students will be able to:

- Describe Azure Synapse Analytics

- Describe Azure Databricks

- Describe Azure Data Lake storage

- Describe Delta Lake architecture

- Describe Azure Stream Analytics

Run interactive queries using Azure Synapse Analytics serverless SQL pools

In this module, students will learn how to work with files stored in the data lake and external file sources, through T-SQL statements executed by a serverless SQL pool in Azure Synapse Analytics. Students will query Parquet files stored in a data lake, as well as CSV files stored in an external data store. Next, they will create Azure Active Directory security groups and enforce access to files in the data lake through Role-Based Access Control (RBAC) and Access Control Lists (ACLs).

Lessons

- Explore Azure Synapse serverless SQL pools capabilities

- Query data in the lake using Azure Synapse serverless SQL pools

- Create metadata objects in Azure Synapse serverless SQL pools

- Secure data and manage users in Azure Synapse serverless SQL pools

Lab : Run interactive queries using serverless SQL pools

- Query Parquet data with serverless SQL pools

- Create external tables for Parquet and CSV files

- Create views with serverless SQL pools

- Secure access to data in a data lake when using serverless SQL pools

- Configure data lake security using Role-Based Access Control (RBAC) and Access Control Lists (ACLs)

After completing this module, students will be able to:

- Understand Azure Synapse serverless SQL pools capabilities

- Query data in the lake using Azure Synapse serverless SQL pools

- Create metadata objects in Azure Synapse serverless SQL pools

- Secure data and manage users in Azure Synapse serverless SQL pools

Data exploration and transformation in Azure Databricks

This module teaches how to use various Apache Spark DataFrame methods to explore and transform data in Azure Databricks. The student will learn how to use standard DataFrame methods to explore and transform data. They will also learn how to perform more advanced tasks, such as removing duplicate data, manipulating date/time values, renaming columns, and aggregating data.

Lessons

- Describe Azure Databricks

- Read and write data in Azure Databricks

- Work with DataFrames in Azure Databricks

- Work with DataFrames advanced methods in Azure Databricks

Lab : Data Exploration and Transformation in Azure Databricks

- Use DataFrames in Azure Databricks to explore and filter data

- Cache a DataFrame for faster subsequent queries

- Remove duplicate data

- Manipulate date/time values

- Remove and rename DataFrame columns

- Aggregate data stored in a DataFrame

After completing this module, students will be able to:

- Describe Azure Databricks

- Read and write data in Azure Databricks

- Work with DataFrames in Azure Databricks

- Work with DataFrames advanced methods in Azure Databricks

Explore, transform, and load data into the Data Warehouse using Apache Spark

This module teaches how to explore data stored in a data lake, transform the data, and load data into a relational data store. The student will explore Parquet and JSON files and use techniques to query and transform JSON files with hierarchical structures. Then the student will use Apache Spark to load data into the data warehouse and join Parquet data in the data lake with data in the dedicated SQL pool.

Lessons

- Understand big data engineering with Apache Spark in Azure Synapse Analytics

- Ingest data with Apache Spark notebooks in Azure Synapse Analytics

- Transform data with DataFrames in Apache Spark Pools in Azure Synapse Analytics

- Integrate SQL and Apache Spark pools in Azure Synapse Analytics

Lab : Explore, transform, and load data into the Data Warehouse using Apache Spark

- Perform Data Exploration in Synapse Studio

- Ingest data with Spark notebooks in Azure Synapse Analytics

- Transform data with DataFrames in Spark pools in Azure Synapse Analytics

- Integrate SQL and Spark pools in Azure Synapse Analytics

After completing this module, students will be able to:

- Describe big data engineering with Apache Spark in Azure Synapse Analytics

- Ingest data with Apache Spark notebooks in Azure Synapse Analytics

- Transform data with DataFrames in Apache Spark Pools in Azure Synapse Analytics

- Integrate SQL and Apache Spark pools in Azure Synapse Analytics

Ingest and load data into the data warehouse

This module teaches students how to ingest data into the data warehouse through T-SQL scripts and Synapse Analytics integration pipelines. The student will learn how to load data into Synapse dedicated SQL pools with PolyBase and COPY using T-SQL. The student will also learn how to use workload management along with a Copy activity in an Azure Synapse pipeline for petabyte-scale data ingestion.

Lessons

- Use data loading best practices in Azure Synapse Analytics

- Petabyte-scale ingestion with Azure Data Factory

Lab : Ingest and load Data into the Data Warehouse

- Perform petabyte-scale ingestion with Azure Synapse Pipelines

- Import data with PolyBase and COPY using T-SQL

- Use data loading best practices in Azure Synapse Analytics

After completing this module, students will be able to:

- Use data loading best practices in Azure Synapse Analytics

- Perform petabyte-scale ingestion with Azure Data Factory

Transform data with Azure Data Factory or Azure Synapse Pipelines

This module teaches students how to build data integration pipelines to ingest from multiple data sources, transform data using mapping data flows, and perform data movement into one or more data sinks.

Lessons

- Data integration with Azure Data Factory or Azure Synapse Pipelines

- Code-free transformation at scale with Azure Data Factory or Azure Synapse Pipelines

Lab : Transform Data with Azure Data Factory or Azure Synapse Pipelines

- Execute code-free transformations at scale with Azure Synapse Pipelines

- Create data pipeline to import poorly formatted CSV files

- Create Mapping Data Flows

After completing this module, students will be able to:

- Perform data integration with Azure Data Factory

- Perform code-free transformation at scale with Azure Data Factory

Orchestrate data movement and transformation in Azure Synapse Pipelines

In this module, students will learn how to create linked services and orchestrate data movement and transformation using notebooks in Azure Synapse Pipelines.

Lessons

- Orchestrate data movement and transformation in Azure Data Factory

Lab : Orchestrate data movement and transformation in Azure Synapse Pipelines

- Integrate Data from Notebooks with Azure Data Factory or Azure Synapse Pipelines

After completing this module, students will be able to:

- Orchestrate data movement and transformation in Azure Synapse Pipelines

End-to-End security with Azure Synapse Analytics

In this module, students will learn how to secure a Synapse Analytics workspace and its supporting infrastructure. The student will observe the SQL Active Directory Admin, manage IP firewall rules, manage secrets with Azure Key Vault and access those secrets through a Key Vault linked service and pipeline activities. The student will understand how to implement column-level security, row-level security, and dynamic data masking when using dedicated SQL pools.

Lessons

- Secure a data warehouse in Azure Synapse Analytics

- Configure and manage secrets in Azure Key Vault

- Implement compliance controls for sensitive data

Lab : End-to-end security with Azure Synapse Analytics

- Secure Azure Synapse Analytics supporting infrastructure

- Secure the Azure Synapse Analytics workspace and managed services

- Secure Azure Synapse Analytics workspace data

After completing this module, students will be able to:

- Secure a data warehouse in Azure Synapse Analytics

- Configure and manage secrets in Azure Key Vault

- Implement compliance controls for sensitive data
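
The three data-protection ideas named in this module (row-level security, column-level security, and dynamic data masking) can be illustrated with a small plain-Python sketch. This is not how dedicated SQL pools implement them, and it does not use any Azure API; the `mask_email` rule, the `visible_rows` helper, the `region` and `is_admin` fields, and the sample records are all hypothetical and exist only to show what each control does to a query result.

```python
def mask_email(email):
    """Hypothetical masking rule: keep the first character and the top-level domain."""
    local, _, domain = email.partition("@")
    top_level = domain.rsplit(".", 1)[-1]
    return f"{local[:1]}XXX@XXXX.{top_level}"


def visible_rows(rows, user):
    """Return the rows and columns this user may see, with sensitive values masked."""
    result = []
    for row in rows:
        # Row-level rule: non-admins only see rows for their own region.
        if not user["is_admin"] and row["region"] != user["region"]:
            continue
        shown = dict(row)
        if not user["is_admin"]:
            # Column-level rule: hide the salary column entirely.
            shown.pop("salary", None)
            # Masking rule: expose only an obfuscated form of the email.
            shown["email"] = mask_email(row["email"])
        result.append(shown)
    return result


# Hypothetical sample data and users.
employees = [
    {"region": "east", "email": "ann@example.com", "salary": 100},
    {"region": "west", "email": "bob@example.org", "salary": 200},
]
analyst = {"region": "east", "is_admin": False}
admin = {"region": "west", "is_admin": True}

analyst_view = visible_rows(employees, analyst)
admin_view = visible_rows(employees, admin)
```

Here the analyst sees a single row, without the salary column and with a masked email, while the admin sees both rows unchanged.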

Support Hybrid Transactional Analytical Processing (HTAP) with Azure Synapse Link

In this module, students will learn how Azure Synapse Link enables seamless connectivity of an Azure Cosmos DB account to a Synapse workspace. The student will understand how to enable and configure Synapse Link, then how to query the Azure Cosmos DB analytical store using Apache Spark and SQL serverless.

Lessons

- Design hybrid transactional and analytical processing using Azure Synapse Analytics

- Configure Azure Synapse Link with Azure Cosmos DB

- Query Azure Cosmos DB with Apache Spark pools

- Query Azure Cosmos DB with serverless SQL pools

Lab : Support Hybrid Transactional Analytical Processing (HTAP) with Azure Synapse Link

- Configure Azure Synapse Link with Azure Cosmos DB

- Query Azure Cosmos DB with Apache Spark for Synapse Analytics

- Query Azure Cosmos DB with serverless SQL pool for Azure Synapse Analytics

After completing this module, students will be able to:

- Design hybrid transactional and analytical processing using Azure Synapse Analytics

- Configure Azure Synapse Link with Azure Cosmos DB

- Query Azure Cosmos DB with Apache Spark for Azure Synapse Analytics

- Query Azure Cosmos DB with SQL serverless for Azure Synapse Analytics

Real-time Stream Processing with Stream Analytics

In this module, students will learn how to process streaming data with Azure Stream Analytics. The student will ingest vehicle telemetry data into Event Hubs, then process that data in real time, using various windowing functions in Azure Stream Analytics. They will output the data to Azure Synapse Analytics. Finally, the student will learn how to scale the Stream Analytics job to increase throughput.

Lessons

- Enable reliable messaging for Big Data applications using Azure Event Hubs

- Work with data streams by using Azure Stream Analytics

- Ingest data streams with Azure Stream Analytics

Lab : Real-time Stream Processing with Stream Analytics

- Use Stream Analytics to process real-time data from Event Hubs

- Use Stream Analytics windowing functions to build aggregates and output to Synapse Analytics

- Scale the Azure Stream Analytics job to increase throughput through partitioning

- Repartition the stream input to optimize parallelization

After completing this module, students will be able to:

- Enable reliable messaging for Big Data applications using Azure Event Hubs

- Work with data streams by using Azure Stream Analytics

- Ingest data streams with Azure Stream Analytics
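
As a rough illustration of the windowing idea used in this module, the sketch below averages vehicle speed per fixed, non-overlapping (tumbling) window in plain Python. It is only a conceptual model: Azure Stream Analytics expresses windows in its own query language, and the `tumbling_window_avg` function, the event tuple layout, and the sample telemetry are assumptions made for the example.

```python
from collections import defaultdict


def tumbling_window_avg(events, window_seconds):
    """Average speed per (vehicle, window start) over fixed, non-overlapping windows.

    events: iterable of (vehicle_id, event_time_seconds, speed) tuples.
    """
    totals = defaultdict(lambda: [0.0, 0])  # (vehicle, window start) -> [sum, count]
    for vehicle_id, event_time, speed in events:
        # Align the event time to the start of the window that contains it.
        window_start = event_time - (event_time % window_seconds)
        bucket = totals[(vehicle_id, window_start)]
        bucket[0] += speed
        bucket[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


# Hypothetical telemetry: (vehicle id, event time in seconds, speed).
sample_events = [
    ("car-1", 0, 50.0),
    ("car-1", 4, 70.0),
    ("car-1", 12, 80.0),
    ("car-2", 3, 30.0),
]
averages = tumbling_window_avg(sample_events, window_seconds=10)
```

Each event falls into exactly one window, which is what distinguishes a tumbling window from overlapping window types.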

Create a Stream Processing solution with Event Hubs and Azure Databricks

In this module, students will learn how to ingest and process streaming data at scale with Event Hubs and Spark Structured Streaming in Azure Databricks. The student will learn the key features and uses of Structured Streaming. The student will implement sliding windows to aggregate over chunks of data and apply watermarking to remove stale data. Finally, the student will connect to Event Hubs to read and write streams.

Lessons

- Process streaming data with Azure Databricks structured streaming

Lab : Create a Stream Processing Solution with Event Hubs and Azure Databricks

- Explore key features and uses of Structured Streaming

- Stream data from a file and write it out to a distributed file system

- Use sliding windows to aggregate over chunks of data rather than all data

- Apply watermarking to remove stale data

- Connect to Event Hubs to read and write streams

After completing this module, students will be able to:

- Process streaming data with Azure Databricks structured streaming
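
The sliding-window and watermarking ideas from this module can be sketched in plain Python as follows. This is a simplified model, not Spark Structured Streaming itself: real watermarks are evaluated per micro-batch and against window state, whereas this sketch checks each event on arrival. The `sliding_window_counts` function, its parameters, and the sample events are hypothetical.

```python
from collections import defaultdict


def sliding_window_counts(events, window_size, slide, watermark_delay):
    """Count events per overlapping window, dropping events older than the watermark.

    events: iterable of (event_time_seconds, key) tuples in arrival order.
    Returns (counts keyed by (window start, key), list of dropped events).
    """
    counts = defaultdict(int)
    dropped = []
    max_event_time = None
    for event_time, key in events:
        # The watermark trails the latest event time seen so far.
        if max_event_time is None or event_time > max_event_time:
            max_event_time = event_time
        watermark = max_event_time - watermark_delay
        if event_time < watermark:
            dropped.append((event_time, key))  # too late: treated as stale
            continue
        # The event belongs to every window [start, start + window_size)
        # whose start is a multiple of `slide` and which covers event_time.
        start = ((event_time - window_size) // slide + 1) * slide
        while start <= event_time:
            counts[(start, key)] += 1
            start += slide
    return dict(counts), dropped


# Hypothetical events; the last one arrives long after its event time.
sample_events = [(10, "a"), (12, "a"), (27, "a"), (5, "a")]
window_counts, stale_events = sliding_window_counts(
    sample_events, window_size=10, slide=5, watermark_delay=10
)
```

Because the windows overlap, each accepted event is counted in two windows here, and the late event with time 5 is discarded once the watermark has advanced to 17.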

Microsoft Job Role-based Azure Certifications

Microsoft has aligned Azure certifications and training to job roles - focusing on Admin, Dev or Architect.

Each certification requires 2 exams and no certification has any prerequisite certification requirements.

Certification Camps has developed a comprehensive training / delivery format which focuses on learning beyond the core content accessible to any Microsoft training provider. Our program incorporates interactive demonstrations with explanations which go beyond the content of the book. Additional content, videos, labs & demonstrations are provided to expand on advanced topics - providing additional insight and perspective. Certification Camps training is not the typical book & PowerPoint presentation found at any local training center.

As a Microsoft Certified Partner with Gold Learning Competency, we adhere to the strict guidelines, standards and requirements to use Microsoft's exclusive curriculum. Moreover, our standards go beyond the "minimum requirements" set forth by Microsoft Learning.

We leverage our partnership benefits of courseware customization to build end to end technology training solutions. Students gain practical skills which can be implemented immediately.

At most training centers - learning starts on the first day of class and ends on the last day. Our boot camp training program is designed to offer resources before, during and after.

CERTIFICATION CAMPS FACILITIES

CAMPUS - Certification Camps built out a standalone training center (not a hotel conference room) with spacious classrooms, new desks, Herman Miller Aeron chairs & comfortable common areas. Each student has a dedicated desk with two monitors. Each classroom has a maximum of two rows - so everyone is able to be engaged without the "back row" feeling.

CLASSROOM EQUIPMENT - Students work on a dedicated Dell Client Desktop with 32GB memory and a 512GB SSD drive. All labs are executed in the extremely fast Microsoft Data Center hosted lab environment.

CAMPUS INTERNET - The campus is connected with a 1Gbps (1,000 Mbps) Verizon Fios Business Connection which provides complete internet (including VPN) access for students.

COMMON AREA - Amenities include snacks and drinks (coffee, 100% juices, sodas, etc.), all complimentary.

LODGING - We use the Hyatt Place Lakewood Ranch. This "upgraded" hotel offers extremely comfortable beds, great breakfast and very fast internet access.

NEARBY - Many shops, restaurants and grocery options are available within walking distance. Additionally, the hotel provides scheduled shuttle services. Restaurants include Cheesecake Factory, California Pizza Kitchen, Panera Bread, Bone Fish Grill, Ruby Tuesday's, Five Guys, Chipotle, Chili's and over 20 additional choices in the immediate area.